From Chief Security Officer to Chief Trust Officer

Why technology needs human-centred leadership - and how artificial constraints and calm technology can restore trust in our digital world

In my years working in cybersecurity - investigating major data breaches, building detection systems, and watching organisations struggle with digital threats - I've learned something counterintuitive: our biggest security problem isn't technical. It's trust.

We have spent decades building what I call "confidence-based security" - systems designed for predictable inputs and outputs, controlled environments, and compliance checkboxes. But confidence isn't enough anymore. In our complex, interconnected world, what organisations really need is trust. And trust requires a fundamentally different approach to how we think about technology, people, and leadership.

Beyond security: Trust as modern currency

Traditional security thinking assumes we can engineer our way to safety through controls, policies, and restrictions. This works (more or less) in simple systems, but breaks down in the complex reality where humans and technology intersect. Real work happens through shortcuts, adaptations, and behaviours we never planned for - what I call "desire paths" in our digital landscape.

The human behaviour that leads to security incidents is often the very behaviour that makes organisations effective in the first place. There's no conscious moment where someone decides "today is a good day to get hacked." People are trying to get work done, connect with others, and solve problems, based on what they know and understand at that moment. If our technology fights against these natural patterns, we have already lost.

This realisation led me to propose that organisations need to evolve beyond Chief Information Security Officers to Chief Trust Officers - leaders whose mandate extends far beyond preventing breaches to actively building trust in how technology serves human needs. With that extended mandate, we could focus not only on protecting technology against people, but also on protecting people against technology. In other words: why spend so much time and effort keeping our business safe from criminals when our own products and technology might not be entirely trustworthy for our customers?

So what would trust-centred technology actually look like? Over the past few years, I've been experimenting (on a personal scale) with answers.

Experimenting with calm technology

The e-ink family calendar

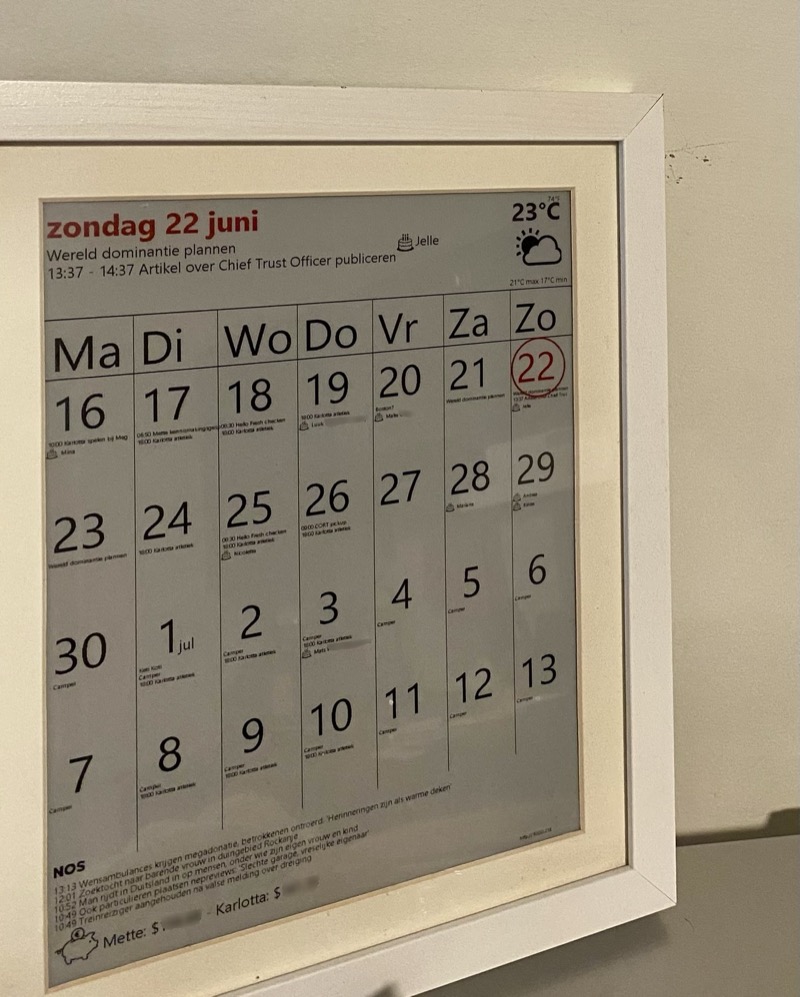

Standing in our kitchen is an e-ink display showing our family's shared Google Calendar. It displays four weeks ahead, today's weather, a few national news headlines, and - perhaps most importantly for our kids - their current pocket money balances from their bank accounts.

This simple device eliminates the need for family members to constantly check multiple apps, creating what researcher Mark Weiser called "calm technology" - computing that's there when you need it but doesn't demand attention. This concept, developed alongside John Seely Brown and Rich Gold at Xerox PARC, and more recently championed by designers like Amber Case, stands in stark contrast to today's attention-hungry interfaces [1]. No notifications, no infinite scroll, no algorithm trying to capture engagement. Just the information we need, when we need it, in a format that does not send everybody off to their individual devices.

The paper card music jukebox

A few years ago, I became frustrated with a paradox: Spotify gives us access to virtually all music ever recorded, but I felt that this infinite choice was actually diminishing rather than enhancing our musical experience. In addition, it requires a digital screen to access it - and I was reluctant to hand my young children an iPad just to play music.

So I built something different: a music player that uses a barcode scanner (like those in supermarkets) and physical paper cards about the size of a credit card. Each card shows the song name, artist, artwork, and a barcode. Scan a card, and that song plays - the whole song, without the ability to skip. You can queue one additional song, but no more, because complexity kills the magic.
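The scan-and-queue rule can be captured in a few lines. The following is only a minimal sketch of the behaviour described above, not the code running on the actual device: the `Jukebox` class, its method names, and the barcode-to-title catalogue are all hypothetical, and real audio playback is left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Jukebox:
    """Toy model of the card jukebox: one song playing, at most one queued."""

    catalogue: dict[str, str]  # barcode -> song title (hypothetical data)
    now_playing: Optional[str] = None
    queued: Optional[str] = None

    def scan(self, barcode: str) -> str:
        """Handle a scanned card and report what happened."""
        song = self.catalogue.get(barcode)
        if song is None:
            return "unknown card"
        if self.now_playing is None:
            self.now_playing = song
            return f"playing {song}"
        if self.queued is None:
            self.queued = song
            return f"queued {song}"
        # One song playing and one waiting: further scans are refused.
        return "queue full"

    def song_finished(self) -> None:
        """Called when the current song ends; the queued song, if any, starts."""
        self.now_playing, self.queued = self.queued, None
```

Note that there is deliberately no `skip` method: the only way to move on is for `song_finished` to be called when the current song has ended.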

To add new music to the collection (a growing pile of cards…), you must consciously decide to print a new card. This artificial constraint recreates the excitement we used to feel when buying a single or cassette tape - that sense of ownership and anticipation that infinite streaming has eroded.

Constrained playlists

Building on this theme, I created an app for making playlists with artificial limits: maximum 90 minutes (the length of a cassette tape) and no more than four playlists in total. The goal was to recreate the "road trip soundtrack" phenomenon - where limited choice creates deeper emotional connection and meaning.
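The two limits are simple to express in code. This is a rough sketch under my own assumptions rather than the app's real implementation: the `Library` class, the `LimitReached` exception, and the choice to store track lengths in seconds are all invented for illustration.

```python
MAX_PLAYLIST_SECONDS = 90 * 60  # one cassette tape
MAX_PLAYLISTS = 4


class LimitReached(Exception):
    """Raised when an action would break one of the artificial constraints."""


class Library:
    """Hypothetical playlist store that enforces both limits."""

    def __init__(self) -> None:
        # playlist name -> list of (track title, length in seconds)
        self.playlists: dict[str, list[tuple[str, int]]] = {}

    def create_playlist(self, name: str) -> None:
        if name in self.playlists:
            raise ValueError(f"playlist {name!r} already exists")
        if len(self.playlists) >= MAX_PLAYLISTS:
            raise LimitReached("no more than four playlists in total")
        self.playlists[name] = []

    def add_track(self, playlist: str, title: str, seconds: int) -> None:
        tracks = self.playlists[playlist]
        total = sum(length for _, length in tracks)
        if total + seconds > MAX_PLAYLIST_SECONDS:
            raise LimitReached("playlist would exceed 90 minutes")
        tracks.append((title, seconds))
```

In this sketch the limits are hard-coded constants rather than settings, which keeps them visible and unchanging for the person using the app.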

When you could only fit a few CDs in your car's glove box (or worse: that one cassette tape that had gotten stuck in the radio…), those albums would become the soundtrack of your journey, listened to repeatedly until every song was associated with specific parts of the trip. Infinite choice may seem like freedom, but it often leads to shallow engagement, decision paralysis and constant skipping to find something "better".

The technology trust crisis

These small-scale experiments reveal something crucial about our current relationship with technology. The tools designed to give us infinite options and constant connectivity are often making us less happy, less focused, and less intentional about our choices.

Consider smartphone addiction among both children and adults - a crisis that could have been prevented by leaders asking different questions during product development. Instead of "how do we maximise engagement?" what if teams had asked "how do we build tools that people can put down?" Instead of "how do we capture attention?" what if they'd focused on "how do we serve people's actual needs?"

This isn't just a technology problem; it's a leadership and societal problem. When product decisions prioritise business metrics over human wellbeing, when growth trumps trust, we create systems that ultimately undermine both user satisfaction and long-term business sustainability.

The business case for trust-centred design is still being written, but early indicators are promising. Companies that have built reputations for respecting user autonomy often command customer loyalty that translates to sustainable growth - though measuring this directly remains challenging in our engagement-obsessed metrics landscape.

Social media algorithms that optimise for engagement rather than truth, dark patterns that trick users into subscriptions they don't want, interfaces designed to create dependency rather than empowerment - these aren't inevitable consequences of digital progress. They're choices made by leaders who weren't asking the right questions.

The Chief Trust Officer vision

This is why I believe organisations need to move beyond traditional security thinking toward explicit trust leadership. CISOs have traditionally focused on protecting technology against people - securing systems from human error, malicious insiders, and external threats. But we need to broaden this scope to also protect people against technology - not just from criminals and malware, but from the manipulative design choices we ourselves create. I see a clear imperative when looking at past and current systems and applications, and I think the current wave of AI-powered ideas warrants even more thought about how we interact with novel technology.

Whether this evolution takes the form of a dedicated Chief Trust Officer or becomes an explicit part of the CEO's mandate, someone needs authority over the fundamental question: does this technology decision build or erode trust?

This leader would evaluate every product decision, system design, and user interaction through a trust framework:

- How does this benefit our business long-term?

- Does this respect human psychology and wellbeing?

- Are we creating tools people can trust and control?

- What artificial constraints might actually improve the user experience?

The financial benefits of this approach are harder to measure in traditional metrics, but may include lower customer acquisition costs through genuine word-of-mouth, reduced support burden from clearer interfaces, and differentiation in increasingly crowded markets. Meanwhile, the risks of manipulation-based business models are becoming clearer: regulatory backlash, talent retention challenges, and eventual customer fatigue with exploitative design.

This isn't just about security and privacy - though those remain crucial. It's about the broader question of whether technology serves human flourishing or exploits human weakness.

Building trust through constraints and transparency

My experiments with artificial constraints reveal two important principles: limitations can be features (not bugs), and transparency builds trust.

Each of these small projects is completely predictable and observable. The e-ink calendar shows exactly what it shows - no hidden algorithms deciding what you see. The paper card music jukebox does exactly one thing when you scan a card - it plays the song, completely. The constrained playlists have clear, visible limits - 90 minutes, four in total.

There are no surprise features, no data collection you didn't expect, no algorithmic "improvements" that change the experience. You know exactly what the system will do because the constraints are visible and unchanging.

This predictability is the opposite of most modern technology, where algorithms constantly shift, features appear without warning, and people never quite know what their devices are doing behind the scenes.

Your move

I can only hope the shift from engagement-driven to trust-driven technology is inevitable. The question is whether your organisation will lead it or be disrupted by it.

Business leaders: Start asking trust questions in product decisions. Experiment with beneficial constraints.

Consumers: Support companies that prioritise transparency over manipulation. Make conscious choices about your own technology use.

Technologists: Build predictable tools that people love and enjoy using, yet can also put down.

Everyone: Demand technology that serves human flourishing, not just business metrics. Technology that does its magic without stealing our attention, and that keeps cybercriminals out without keeping its paying customers locked in.

Trust isn't just nice-to-have anymore - it's the currency that determines which organisations will stay ahead in an era where people are tired of being products instead of customers. The companies building this future aren't sacrificing profitability for principles - they're discovering that sustainable business success and human-centred design are not just compatible, but mutually reinforcing.

What trust-building experiments might you try? The world needs leaders who understand that sometimes the most innovative thing you can build is technology that knows when to step back. In my Black Hat talk in 2016, I shared some of my other ideas on how we need to incorporate design thinking and human-centred design into cybersecurity.

1. See calmtech.com for the principles of Calm Technology